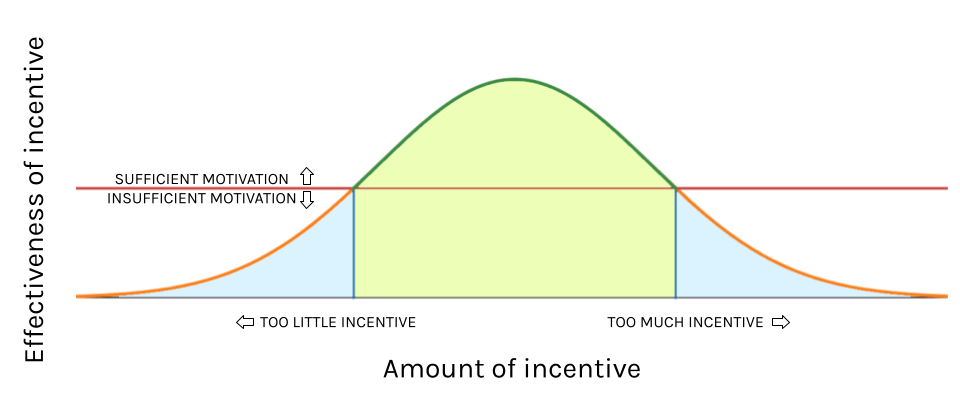

If something is incentivized too strongly, it becomes disincentivized.

Extrinsic motivation has important social utility. It can both drive prosocial behavior and inhibit antisocial behavior. In many cases, extrinsic rewards are natural. Praise is a natural response to someone doing something we want them to do, for example, as is expressing anger towards someone for doing something we don’t want them to do. A large part of human behavior is driven by the “reward center” of the brain, even things we would consider intrinsically motivating. Consideration of reward (or punishment) is also part of purely rational decision-making. In economic terms, a rational decision is one that maximizes marginal utility (roughly, “has the greatest rewards”) while minimizing marginal cost. Even in completely irrational behavior, the irrationality usually comes from misperception of costs and benefits, not from a genuine disregard for costs and benefits. Misperception like this can arise from a failure to consider possible outcomes and their relative utility. Remember that a “benefit” or “utility” can be as simple as fulfillment of a desire.

An incentive is a way of intentionally creating extrinsic motivation for a person to do a certain thing or behave in a certain way. For this post I will consider disincentives to be incentives against some particular action or behavior, so when I speak of incentives in general, I am including disincentives. Incentives include promising a child candy if they behave themselves, offering a raise to employees if they meet a certain metric, fines and punishments for violating laws or rules, and so on. Note that this is not the same as reinforcement or punishment in the sense of behavioral psychology. In positive reinforcement, for example, the subject is rewarded for an action they would have done on their own. If a child is rewarded with candy for being well-behaved when they were not expecting to be rewarded, then the behavior is being reinforced but it had not been incentivized. With an incentive, a person can be motivated to do something they would not otherwise do. And of course, many things can be both reinforcing and incentivizing.

So, what is the problem? It arises when the incentive is something so strongly motivating that acquiring it by any means necessary outweighs the potential costs of acquiring it “the wrong way.” For example, there are many ways in which students are incentivized to get good grades. However, if something extremely important to a student is predicated on them getting good grades (for example losing access to their phone if they don’t get straight A’s), then the student may be motivated not to focus on studying, but rather to ensure that their parents believe they are earning straight A’s. This could mean cheating on a test, lying about their grades, and so on. By diverting motivation away from genuinely performing well, the parents’ policy not only fails to incentivize earning good grades, but in fact disincentivizes the student from earning good grades.

An important question is, why might some students respond to this situation by studying harder while others respond by lying or cheating? A key factor is probability estimation. Decision making isn’t strictly about costs and benefits, but rather expected costs and benefits based on one’s own estimation of the probability of different outcomes. A student who is confident they can earn straight A’s will rarely if ever be motivated to cheat, whereas a student who believes they cannot earn straight A’s no matter how hard they work will be highly motivated to cheat. We can think about this in terms of expected value (EV). Expected value is defined as the sum, over every possible outcome of an event, of the value of that outcome times its probability. It works like a long-term average of outcomes if you were to repeat the event many times.

For example, suppose you roll a pair of dice. If you roll a 7, you win $120. If you roll any other number, you lose $6. How much money would you expect to win? Is the game worth the risk? Recall that the probability of rolling a 7 with a pair of dice is 1/6 and the probability of rolling any other number is 5/6.

EV of dice game = 120*(1/6) – 6*(5/6) = 15

So the expected gain from playing the game is $15. Generally speaking, this indicates it’s rational to play the game rather than not (since doing nothing has an expected gain or loss of $0.)
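The same figure can be checked by brute force. The following is a minimal sketch that assumes two fair six-sided dice and enumerates all 36 equally likely rolls; the function name `payoff` is only illustrative.

```python
from fractions import Fraction
from itertools import product

# Enumerate all 36 equally likely outcomes of two fair six-sided dice.
rolls = list(product(range(1, 7), repeat=2))
p_each = Fraction(1, len(rolls))

def payoff(total):
    # Win $120 on a 7, lose $6 on any other total (the game described above).
    return 120 if total == 7 else -6

# Expected value: payoff of each outcome times its probability, summed.
ev = sum(payoff(a + b) * p_each for a, b in rolls)
p_seven = sum(p_each for a, b in rolls if a + b == 7)

print(p_seven)  # 1/6
print(ev)       # 15
```

Using exact fractions avoids floating-point rounding, so the result matches the hand calculation exactly.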

Let’s return to our example with the students. Suppose that Student 1, a confident student, estimates their chances of succeeding in earning straight A’s legitimately to be 90%, while Student 2 (an unconfident student) estimates their chances at 30%. Additionally, Student 1 estimates the cost of doing the necessary studying to be 24 utils (“util” is a hypothetical unit of utility, which is not actually numerically measurable) while Student 2 estimates the cost at 36 utils. For simplicity, we will assume both students have the same probability and cost estimations for getting caught if they cheat (we’ll say a 25% chance of getting caught at a cost of 2,000 utils). The cost of putting in the effort to cheat is 60 utils. Finally, the utility of receiving the incentive, by either earning straight A’s or cheating without getting caught, is 40,000 utils.

| | Student 1 (confident) | Student 2 (unconfident) |
| --- | --- | --- |
| EV of studying | 0.9 × 40,000 + 0.1 × 0 = 36,000 utils | 0.3 × 40,000 + 0.7 × 0 = 12,000 utils |
| Net utility of studying | 36,000 – 24 = 35,976 utils | 12,000 – 36 = 11,964 utils |
| EV of cheating | 0.75 × 40,000 – 0.25 × 2,000 = 29,500 utils | 0.75 × 40,000 – 0.25 × 2,000 = 29,500 utils |
| Net utility of cheating | 29,500 – 60 = 29,440 utils | 29,500 – 60 = 29,440 utils |
| Rational strategy | Studying | Cheating |
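For readers who want to vary the numbers, here is a small sketch of the same comparison. It uses only the estimates stated in the example (including the 2,000-util cost of getting caught); the function and constant names are my own, and utils remain a hypothetical unit.

```python
REWARD = 40_000       # utility of receiving the incentive
P_CAUGHT = 0.25       # estimated chance of getting caught when cheating
COST_CAUGHT = 2_000   # cost of getting caught
COST_CHEATING = 60    # effort of cheating

def net_studying(p_success, study_cost):
    # Expected reward from studying, minus the effort of studying.
    return p_success * REWARD - study_cost

def net_cheating():
    # Expected reward from cheating, minus expected penalty and effort.
    ev = (1 - P_CAUGHT) * REWARD - P_CAUGHT * COST_CAUGHT
    return ev - COST_CHEATING

# (estimated probability of success, estimated cost of studying)
students = {"Student 1": (0.9, 24), "Student 2": (0.3, 36)}
for name, (p, cost) in students.items():
    study, cheat = net_studying(p, cost), net_cheating()
    choice = "Studying" if study > cheat else "Cheating"
    print(f"{name}: studying={study:,.0f}, cheating={cheat:,.0f} -> {choice}")
```

Changing only the confidence estimate is enough to flip the outcome, which is the point of the example.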

What’s missing from this calculation is the negative utility brought on by feelings of guilt or shame that may result from lying or cheating. For many students, pride and integrity will prevent them from cheating when it would otherwise be rational for them to do so. However, if the incentive is great enough, it can supersede even these considerations. For example, a person might do something they believe is otherwise morally wrong in order to protect their own life or the lives of others.

Moral considerations can also be hampered by distance (literally or figuratively) between the decision and the negative moral consequences or by collective decisions removing feelings of personal responsibility. These are both issues when corporations make decisions about things like worker safety and negative externalities (such as pollution).

This is also an issue in the field of AI alignment, i.e. making sure AI does things we want it to do. Some AI models are based on reward functions and thus behavior is “incentivized.” This can lead to misalignment. For example, if an AI has the goal “prevent people from dying in a fire,” then it may determine that the most effective way to reach this goal is by killing everyone with poison. In other words, in order for an incentive to be effective, there must be some component incentivizing the how, not just the what.

Going back to the example of the students, we see that there are incentives to earn A’s legitimately (the negative consequences of cheating), however these can be exceeded by the incentive to get A’s by any means necessary. In response to this problem, some parents or teachers may increase the punishment for cheating and/or try to increase the chance of getting caught when cheating. This approach has a fatal flaw: any time a new strategy for cheating is developed that is easier to get away with, the unconfident student will rationally pick that option.
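To get a feel for how much enforcement this would take, the sketch below solves for the catch probability at which cheating stops beating studying for Student 2, holding every other number from the example fixed. The function name is illustrative, and the tenfold penalty of 20,000 utils is a hypothetical value I chose for comparison, not a figure from the example.

```python
REWARD = 40_000            # utility of the incentive (from the example)
COST_CHEATING = 60         # effort of cheating (from the example)
NET_STUDYING_S2 = 11_964   # Student 2's net utility of studying (from the example)

def break_even_catch_probability(penalty):
    """Catch probability above which cheating no longer beats studying for Student 2."""
    # Solve (1 - q) * REWARD - q * penalty - COST_CHEATING = NET_STUDYING_S2 for q.
    return (REWARD - COST_CHEATING - NET_STUDYING_S2) / (REWARD + penalty)

print(f"{break_even_catch_probability(2_000):.3f}")   # 0.666
print(f"{break_even_catch_probability(20_000):.3f}")  # 0.466 (hypothetical tenfold penalty)
```

Under these assumptions, cheaters would need to be caught about two times in three before the unconfident student prefers studying, and even a tenfold penalty only lowers that threshold to a little under half. Any new method that slips below the threshold makes cheating the rational choice again.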

The particular example of Student 1 and Student 2 is fairly unrealistic because it is only meant to illustrate the concept of expected value. In reality, though, strong incentives to get good grades are the reason students cheat or lie about grades. If the student doesn’t care about the outcome, they will fail honestly (another thing that really happens). Typically, in order to prevent cheating, it is strongly disincentivized through severe punishment and low probability of success. However, this leads to teachers playing “whack-a-mole” as students continually find better ways to cheat. This approach can reduce cheating, but it will never solve the root problem.

If the incentive to get good grades is lowered, then it will become no longer worthwhile to cheat. Consider that cheating is rare in low-stakes situations such as a casual game of Pictionary with classmates. Additionally, we can observe that expected value for the utility of earning grades legitimately is dependent on the student’s confidence of success, and not at all on their likelihood of success. This means that, for example, a student who is fully capable of legitimately earning straight A’s may still choose to cheat on a test (this also really happens).
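The effect of lowering the stakes can be sketched with the same numbers. This assumes Student 2's estimates from the earlier example stay fixed while only the size of the reward changes; the reward values of 5,000 and 1,000 utils are hypothetical, as is the function name.

```python
P_SUCCESS_S2, STUDY_COST_S2 = 0.3, 36                     # Student 2's estimates
P_CAUGHT, COST_CAUGHT, COST_CHEATING = 0.25, 2_000, 60   # cheating estimates

def best_option(reward):
    # Compare net expected utility of studying and cheating for a given reward.
    study = P_SUCCESS_S2 * reward - STUDY_COST_S2
    cheat = (1 - P_CAUGHT) * reward - P_CAUGHT * COST_CAUGHT - COST_CHEATING
    return "Studying" if study > cheat else "Cheating"

for reward in (40_000, 5_000, 1_000):
    print(reward, best_option(reward))
# 40000 Cheating
# 5000 Cheating
# 1000 Studying
```

Under these assumptions the crossover sits at roughly 1,164 utils: below that, the fixed expected penalty and effort of cheating outweigh its higher chance of success, even for a student with only 30% confidence.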

To eliminate cheating in school, students must be both confident in their ability to succeed and at the same time willing to try and fail. To build confidence, students must be challenged at an appropriate level for each individual, such that a student perceives an assigned task as both challenging and feasible. For students to be willing to fail, the stakes of success or failure must be lowered. Unfortunately, these things are not typically under the control of individual teachers nor even individual schools, and the rational decision making I described is affected by symptoms of anxiety or depression. In particular, anxiety and depression are characterized in part by unrealistic estimations of probabilities and unrealistic estimations of the (negative) utility of different outcomes. Relative to a person’s own estimations, their decision making is still rational, however it is irrational in the sense that the evidence they have doesn’t support their estimations. This is an example of what is often called “distorted thinking” or “cognitive errors.”

These principles apply equally to other cases of incentivization, including incentivizing employee work performance. In cases of non-human entities however, like corporations or AI, things are a little different. In short, there can never be enough guardrails to reliably prevent bad actors. Even with constant oversight, overseers can be deceived or bribed. However, there are at least ways to mitigate bad outcomes. AI alignment is currently an active field of study. For corporations, giving power to workers tends to disincentivize safety violations. Smaller, local businesses are also less prone to externalizing costs since the people involved in the business are members of the community that would be harmed by negative externalities.

The worst case scenario is essentially what we have now: huge international megacorporations in which workers have very little influence. Such corporations are strongly incentivized to maximize profits, and this is frequently in conflict with incentives to follow rules and regulations. As a result, they will rationally avoid compliance when they think the chances of getting away with it are high enough and the costs of getting caught low enough. In some cases, the consequences for violating a regulation may be a fine that is less than the excess profit gained from avoiding compliance, making the violation the obviously most rational action. However, even with more severe consequences, this is similar to the example of students cheating: the incentive to maximize profit is fundamentally too high, and the best regulators can do is play “whack-a-mole.”