The concept of the technological singularity is a confused one.

Here’s the thing about “general AI” or “self-aware AI”—it will be indistinguishable from really good mimicry of self-aware intelligence. When the singularity happens, we won’t notice.

This is an excerpt of a YouTube comment on a video about AI technology. I thought about responding to this comment, but I decided to do a blog post instead. I have more to say about this than can reasonably fit in a YouTube comment, and I also realized that I don’t particularly care about communicating with this person specifically.

My issue with this comment is that it conflates AGI or AI self-awareness with the singularity. This is something I’ve seen implied many times before, and I think these concepts are muddled.

What is the singularity?

The technological singularity is a hypothetical point in time when technological advancement becomes so rapid that humanity is fundamentally changed as a species in ways we can’t predict. Many proponents of this hypothesis posit self-modifying AI as the engine driving this advancement. Note that we already have self-modifying AI, but so far AI is not as good at engineering AI systems as humans are. This creates a degenerative loop: if human-created AI continually modifies itself without human intervention, each round makes it a little worse until it eventually breaks. The idea is that, once AI is better at creating AI than humans are, the loop flips into a positive feedback loop where the AI gets better and better until it reaches some kind of physical limitation on its improvement. Note the similarity to Moore’s Law, which states (roughly) that the number of transistors on a chip, and with it computing power, doubles about every two years.
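As a rough illustration of the two loops, here is a toy model in Python. It assumes each round of self-modification multiplies a single made-up “capability” number by a constant factor. The function name `iterate_capability` and the factors 0.5 and 2.0 are hypothetical choices for this sketch, not measurements of any real system.

```python
def iterate_capability(start: float, factor: float, generations: int) -> list:
    """Return the capability level after each round of self-modification.

    `factor` is a hypothetical per-round multiplier: below 1 means each
    round makes the system worse, above 1 means each round improves it.
    """
    levels = [start]
    for _ in range(generations):
        levels.append(levels[-1] * factor)
    return levels

# AI that is worse than humans at building AI: every round degrades it.
degrading = iterate_capability(1.0, 0.5, 4)

# AI that is better than humans at building AI: every round compounds.
improving = iterate_capability(1.0, 2.0, 4)

print("degrading:", degrading)
print("improving:", improving)
```

A factor below 1 shrinks toward zero, while a factor of 2 doubles every round, which is the same shape as the repeated doubling in Moore’s Law.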

What is a singularity?

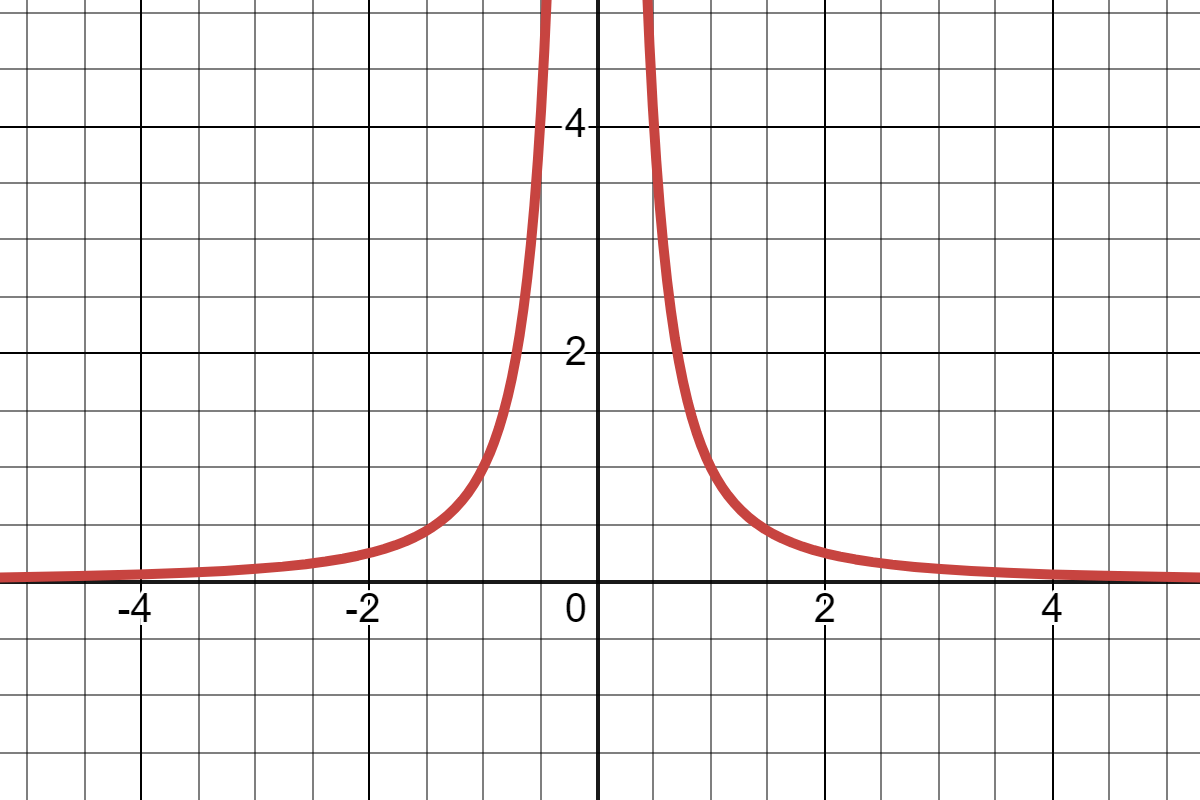

The term singularity is a math analogy. In math, a singularity is a kind of point where a function misbehaves. The key to the name is that it is a singular point, and not an entire region of the graph. What do I mean by misbehave? Mostly, this refers to having a discontinuity: a place where the function is not defined. In particular, you might know “singularity” from high school math as a non-removable discontinuity of a rational function; this would be a point where the graph has a vertical asymptote.

For example, the function 1/x² has a singularity at x = 0.

In this case, the graph as it approaches the singularity from the left exhibits hyperbolic growth.
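To make this concrete, here is a small Python sketch that evaluates 1/x² at points approaching 0 from the left. The sample points are arbitrary, chosen as powers of 1/2 so the arithmetic is exact.

```python
def f(x: float) -> float:
    """The example function 1/x^2, which is undefined at x = 0."""
    return 1.0 / (x * x)

# Step toward the singularity from the left: x = -1, -1/2, -1/4, -1/8.
samples = [-(0.5 ** k) for k in range(4)]
values = [f(x) for x in samples]

for x, y in zip(samples, values):
    print(f"f({x}) = {y}")

# At the singular point itself the function has no value at all.
try:
    f(0.0)
    defined_at_zero = True
except ZeroDivisionError:
    defined_at_zero = False

print("defined at 0:", defined_at_zero)
```

Each halving of the distance to 0 quadruples the value, and there is no bound on how large it gets.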

“Singularity” is a misnomer

The technological singularity is named after this kind of hyperbolic growth, but when discussing it above, we talked about positive feedback loops and repeated doubling, which are things that grow exponentially. Hyperbolic growth and exponential growth are both “fast” so they may seem similar, but there are some important differences. Exponential functions approach infinity as x approaches infinity, while a rational function with a singularity approaches infinity at some finite x value. Informally, you could think of this as the exponential function taking an infinite amount of time to “reach infinity” while the rational function “reaches infinity” in a finite amount of time.
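The difference can be seen numerically. The Python sketch below compares an exponential, e^t, with a hyperbolic curve, 1/(t* − t)², that has its singularity at a finite time t*. The choice t* = 1 and the names `exponential`, `hyperbolic`, and `T_STAR` are assumptions made for illustration.

```python
import math

T_STAR = 1.0  # hypothetical time at which the hyperbolic curve blows up

def exponential(t: float) -> float:
    """e^t: finite for every finite t."""
    return math.exp(t)

def hyperbolic(t: float) -> float:
    """1/(T_STAR - t)^2: has no finite value at t = T_STAR."""
    return 1.0 / (T_STAR - t) ** 2

# Both curves start at 1 when t = 0, then diverge sharply as t nears T_STAR.
for t in [0.0, 0.5, 0.75, 0.875, 0.9375]:
    print(f"t = {t:<6}  exponential = {exponential(t):.3f}  hyperbolic = {hyperbolic(t):.1f}")
```

The exponential is still below 3 as t approaches 1, and it stays finite for every t after that. The hyperbolic curve grows without bound before t even reaches 1.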

The idea of the singularity is concordant with “reaching infinity” in that, hypothetically, technology will become so advanced that we won’t even think of technological advancement in the same way. The singularity is supposed to be transformational (or even transcendent). However, there is no mathematical basis for describing it this way. Even supporters of the singularity hypothesis argue that technology is growing only exponentially. “Only exponentially” seems like a strange thing to say if you know how fast exponential growth is, which is why I think it’s important to establish just how different these functions are. To say that hyperbolic growth is faster than exponential growth is such a massive understatement that it’s nearly nonsensical to say at all. The difference isn’t night and day, it’s the darkest depths of space and the surface of the sun.

Unfortunately, the singularity seems to be a confused (perhaps dead) metaphor. Vernor Vinge, who is credited with popularizing the term, seemed to think it had to do with the singularity in a black hole, but (with all due respect) I think this is a sci-fi author’s misinterpretation.

When this happens, human history will have reached a kind of singularity, an intellectual transition as impenetrable as the knotted space-time at the center of a black hole, and the world will pass far beyond our understanding.

…

Falling into the singularity is admittedly a frightening thing, but now we might regard ourselves as caterpillars who will soon be butterflies and, when we look to the stars, take that vast silence as evidence of other races already transformed.

Vinge (1983), OMNI Magazine

As you can see, the analogy is pretty opaque here. There seems to be something about the singularity being an inevitability we are accelerating towards, but even that is reading into it a bit. Vinge supposedly heard the term singularity in a paper by Stanisław Ulam about fellow mathematician John von Neumann. (Von Neumann may have originated the term.)

One conversation centered on the ever accelerating progress of technology and changes in the mode of human life, which gives the appearance of approaching some essential singularity in the history of the race beyond which human affairs, as we know them, could not continue.

Ulam (1958), John von Neumann, 1903-1957

This is much more clearly a description of a mathematical singularity, especially the use of the phrase essential singularity. The singularity in physics is also derived from the mathematical sense of the term. See also Wikipedia – Technological singularity.

The matter at hand

All of this is to say that yes, if the singularity happens then we will notice it. The whole premise of the hypothesis is that we are already on the growth curve. The singularity as an event in time is not when growth begins, nor when it begins accelerating arbitrarily quickly (a turning point), but rather when it explodes. Self-aware AI could be a stepping stone on the way to the singularity (or even a result of the singularity), but they’re not at all the same thing.

Of course, this is beside the point the commenter was actually trying to make, which is that true self-awareness and a good mimicry of self-awareness are indistinguishable. They meant to say when AI becomes self-aware we won’t notice. This is a widely held view, but there is some confusion regarding the terminology here too. For one, the commenter conflates AGI (aka general AI) with self-aware AI. There are other terms that frequently get tossed around as more or less equivalent, including sentience, sapience, and consciousness.

Thought and perception in AI and in organisms

First of all, “AI” is itself a vague, informal term. It can refer to any computer system that performs some sort of “decision making”. This includes both very advanced and very simplistic systems. “AGI” is a hypothetical, also informal, term. The idea is that past and present AI (and computer systems generally) are highly specialized and have narrow ranges for their parameters. Computers are bad at, for example, solving novel problems they’ve never seen before. While computers are very good at certain types of tasks, they lack the flexibility of the human brain. In science fiction, we often see a person ask a computer to do something where the computer has to figure out how to accomplish that task, and the computer may engage in human-like reasoning. This is the dream of AGI. It is as of yet unknown whether such technology is even possible. Even with recent AI breakthroughs, AGI is not really even on the horizon.

Other terms, which describe cognitive faculties more generally, are frequently applied to living things. Sentience is the most basic of these, referring to something that has sensory perception. Most (if not all) animals are sentient, as well as possibly other kinds of organisms. When it comes to AI, it is an open question whether sentience is possible at all. While AI can receive information from the outside world such as through video and audio, it’s not clear when and if these things would count as senses. This gets into the idea of qualia, which I won’t go into detail on here. The point is that sentience is a very specific kind of faculty that does not necessarily require, nor is required by, having other advanced capabilities.

Awareness and consciousness are similar to sentience, but imply some sort of active component like executive functioning. For example, some may say that plants are sentient but not conscious. Getting gradually more complex, self-awareness or self-consciousness entails the capacity to identify oneself as an individual and differentiate between the self and the outside world. It may also involve identifying others as individuals. It is at this “level” that people often start to think of animals as making decisions rather than acting on pure instinct. This includes animals like chimpanzees and dolphins, and possibly many other mammals depending on whom you ask.

Finally, sapience (literally “wisdom”) refers to having human-like intelligence, for example complex linguistic communication, long-term planning, abstract reasoning, and so on. While the most intelligent animals are sometimes considered on the verge of sapience, no known organism is truly sapient except for humans. This is more like when we meet intelligent aliens in sci-fi.

Part of the problem with using these terms to describe AI is that computers and living things just have very different capabilities. We’ve seen that language, once considered a hallmark of sapience, can be virtually mastered (no pun intended) by systems that are a far cry from self-awareness.

The vague question we are really trying to get at is, “Can computers ever have human-like experiences?” Realistically I think the answer is probably no, but I also think this is probably not the right question to be asking, at least partly because it might be impossible to tell one way or the other. This is essentially what the commenter was getting at, but to reiterate, any future AGI may or may not have “self-awareness”.

Concluding thoughts

I think it’s a problem in contemporary discourse on AI that people use many of these words interchangeably. It’s made even more difficult by the fact that these are informal terms to begin with. Some terms can be misleading, for example “neural net” suggests more similarity with the human brain than actually exists. Much of the language around computers is metaphor and analogy, including “the singularity” as we saw, and these metaphors can get confused.

Many people seem to have a kind of sci-fi framework for conceptualizing the future of AI, which is almost certainly inaccurate. There’s also a conflation of technological questions which may someday be answered with philosophical questions that are likely never to be answered (because we haven’t managed to answer them as they pertain to humans or animals).

To sum up, I actually pretty much agree with the comment I quoted at the very beginning, but I think it reflects a lot of confusion regarding the underlying concepts. The main idea of the comment is itself a kind of banal observation. People have been thinking about this since Alan Turing, if not earlier.
