The Second Law of Thermodynamics is one of the most misunderstood scientific laws. Stated briefly, the law says that entropy increases over time in an isolated system. The misunderstanding can come partly from not knowing what an isolated system is, but mostly comes from not knowing what entropy is.

What is entropy?

First, entropy is not a measurement of disorder. In plain English terms, entropy measures how much of a system’s energy is no longer available to do useful work: the higher the entropy, the less useful energy remains. So, in order to really understand entropy, we need to understand energy.

What is energy and when is it useful?

Energy is an abstract concept in physics. I like Richard Feynman’s explanation of it, which I will paraphrase below.

Imagine that you are the mother of a small child. In the child’s room, there is a toy chest filled with 30 toy blocks. Every day, you have to find all the blocks and put them back into the chest. You know there are always 30 blocks; they can’t disappear and more can’t appear. Blocks may be hidden all over the place: under the covers on the bed, in the bathtub, in drawers, and so on. You know the exact dimensions and mass of each of the blocks and have a variety of scientific tools for identifying where the blocks are and how many of them are there. You may find, for example, that the contents of the sock drawer weigh 200 g more than they should if the drawer contained only socks, or that the water level in the bathtub is 3 cm higher than it should be if the tub held only bath water.

So you have completely different measurements that represent basically the same thing: blocks in the drawer, blocks in the bathtub, and so on. Now, what if there never were any actual blocks? You only have these indirect measurements that tell you where “blocks” are, and you know there are always 30 of them, but you never have the blocks themselves. Every day the “blocks” can be in different places, but all your different measurements always tell you there are 30 total.

This is how energy works in an isolated system, i.e., a system in which energy never enters or leaves. There is no such thing as just “energy,” only different forms of energy that we can measure in different ways. The form of energy can change, but there is always the same total amount. Forms of energy include: motion, heat, light, sound, electricity, mass, potential energy from chemical reactions, potential energy from a compressed spring, and potential energy from gravity.
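As a small illustration of the "always 30 blocks" idea, here is a minimal sketch that tracks two forms of energy for a ball dropped from rest. The mass, starting height, value of g, and the function name `energies_during_fall` are all illustrative assumptions, and air resistance is ignored.

```python
G = 9.81  # gravitational acceleration in m/s^2 (assumed value)

def energies_during_fall(mass_kg, start_height_m, time_s):
    """Return (potential, kinetic, total) energy in joules for a ball
    dropped from rest, ignoring air resistance."""
    speed = G * time_s                               # v = g * t
    height = start_height_m - 0.5 * G * time_s ** 2  # h = h0 - g * t^2 / 2
    potential = mass_kg * G * height                 # gravitational potential energy
    kinetic = 0.5 * mass_kg * speed ** 2             # energy of motion
    return potential, kinetic, potential + kinetic

# The two forms trade off against each other, but the total stays the same.
for t in (0.0, 0.5, 1.0):
    pe, ke, total = energies_during_fall(mass_kg=1.0, start_height_m=10.0, time_s=t)
    print(f"t={t:.1f} s  potential={pe:6.2f} J  kinetic={ke:6.2f} J  total={total:6.2f} J")
```

The potential and kinetic columns change from row to row, while the total column does not, which is the bookkeeping the block analogy describes.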

Energy is useful when it can do work, which is, roughly speaking, pushing something to make it move. Energy is able to do work when there is an energy gradient, that is, an area where there is a lot of energy next to an area where there is little energy.

A ball sitting stationary on the ground does not have much energy. A ball sitting at a high elevation has high potential energy, but if it is sitting stationary on a flat surface, then it can’t do any work. On the other hand, a ball sitting stationary at the top of a tall slope can do work, namely by rolling down the slope. This is because the sloped surface creates an energy gradient.

Other examples of energy gradients include something hot next to something cold, something moving fast next to something standing still, and so on. Note that when a temperature difference does work, heat flows from the hot side to the cold side and the two temperatures move toward each other; once they are equal, there is no longer a gradient and no additional work can be done. The same amount of energy is now “spread out evenly,” and this is a state of higher entropy.
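To put rough numbers on the hot-next-to-cold case, the sketch below lets two identical bodies exchange heat until they share one temperature. It assumes a constant heat capacity and uses the standard textbook expression ΔS = C ln(T_final/T_initial) for each body; the function name and the values (1000 J/K, 400 K, 300 K) are hypothetical.

```python
import math

def equalize(heat_capacity, t_hot, t_cold):
    """Two identical bodies exchange heat directly until they reach a
    common temperature. Returns (final_temperature, entropy_change).
    Temperatures are in kelvin, heat_capacity in J/K (assumed constant)."""
    t_final = (t_hot + t_cold) / 2  # total energy is unchanged
    # Each body contributes C * ln(T_final / T_initial) to the entropy change.
    delta_s = heat_capacity * (math.log(t_final / t_hot) + math.log(t_final / t_cold))
    return t_final, delta_s

t_final, delta_s = equalize(heat_capacity=1000.0, t_hot=400.0, t_cold=300.0)
print(f"final temperature: {t_final:.1f} K")
print(f"entropy change:    {delta_s:.2f} J/K")
```

The hot body loses entropy and the cold body gains more than that, so the total goes up even though the total energy is the same before and after.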

So what precisely is entropy?

Entropy S is measured in units of joules per kelvin (energy divided by temperature). It is only meaningful when discussing a change in entropy ΔS, not as an absolute quantity. There is no realistic minimum or maximum entropy for a given system. What entropy is useful for is analyzing how a thermodynamic system changes when some specific process takes place. If an (ideal) process does not increase total entropy, then it is called reversible. Of course, no real-world process is ideal due to imperfect efficiency. The amount by which a process increases entropy determines how much additional energy is required in order to reverse the process. This is the energy that is “lost” from inefficiency.

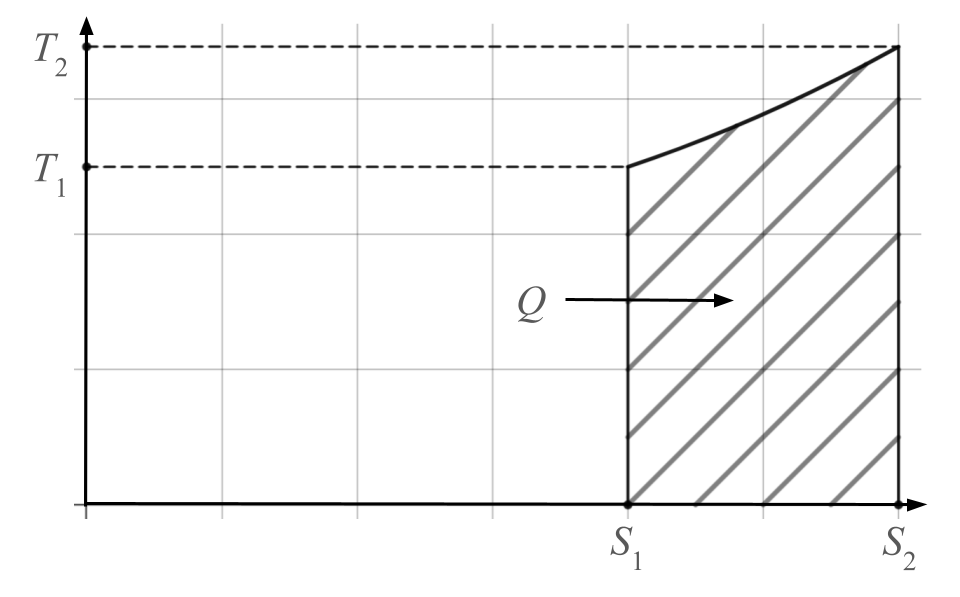

Let’s look at a simple (and highly idealized) example, the isobaric expansion of a heated gas. Charles’ gas law states that, at a given pressure, the volume of a gas is proportional to its absolute temperature. This means that a change in temperature is proportional to a change in volume if pressure is held constant. The change in entropy when this happens is given by:

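ΔS = n C_p ln(T2/T1)

Where n is the number of moles of gas (the quantity of gas particles), C_p is the molar heat capacity of the gas at constant pressure, ln is the natural logarithm, and T1 and T2 are the initial and final temperatures, respectively. For 10 mol of an ideal gas going from 290 K (kelvin) to 490 K and expanding isobarically, the factor n ln(T2/T1) is approximately 5.2 mol. Taking C_p = 5R/2 ≈ 20.8 J/(mol·K), the value for a monatomic ideal gas, the entropy increases by approximately 109 J/K (joules per kelvin).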
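The figure of about 5.2 can be checked numerically. The sketch below evaluates n ln(T2/T1) and, as a labeled assumption, also multiplies by a molar heat capacity at constant pressure C_p, which the standard ideal-gas expression ΔS = n C_p ln(T2/T1) includes. The monatomic value C_p = 5R/2 and the function name are illustrative choices, not something the example above specifies.

```python
import math

R = 8.314462618  # molar gas constant in J/(mol*K)

def isobaric_entropy_change(n_mol, t1_kelvin, t2_kelvin, cp_molar=2.5 * R):
    """Entropy change n * C_p * ln(T2/T1) for an ideal gas heated at
    constant pressure. cp_molar defaults to 5R/2, the monatomic
    ideal-gas value (an assumption for illustration)."""
    return n_mol * cp_molar * math.log(t2_kelvin / t1_kelvin)

# The bare logarithmic factor n * ln(T2/T1), in mol.
log_factor = 10 * math.log(490 / 290)

# The same factor scaled by the assumed heat capacity, in J/K.
delta_s = isobaric_entropy_change(n_mol=10, t1_kelvin=290, t2_kelvin=490)

print(f"n * ln(T2/T1) = {log_factor:.2f} mol")
print(f"entropy change = {delta_s:.1f} J/K (assuming C_p = 5R/2)")
```

A diatomic gas would have a larger C_p, so the result scales with whichever gas is assumed; the logarithmic factor is the same either way.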

Entropy compared to disorder

One interpretation of entropy is that it counts the number of microstates corresponding to a single macrostate. A microstate of a system specifies where the individual molecules are located and how fast each of them is moving. A macrostate consists of the “big picture” measurements like temperature and pressure. This is in line with a statistical approach to thermodynamics.

An analogy

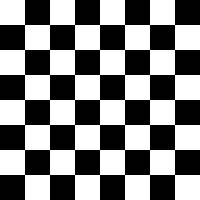

Think about an image made up of pixels. The microstate is the specific color of each individual pixel, and the macrostate is the overall image those pixels represent. There are only a couple of microstates that can produce a low-resolution, pixel-perfect black and white chessboard, making this a “low entropy” image.

However, a high-resolution photograph of a chessboard with noise (such as film grain) can tolerate small differences in pixel color, making it a “high entropy” image.

A thought experiment

Think of a container filled with hot gas and cold gas. Among all the possible positions of the individual gas particles, very few of them have all the hot gas on the left side and all the cold gas on the right side. Most of the microstates involve the two being mixed up together. Hence, a situation in which hot gas and cold gas are separated is a lower entropy macrostate than having the two mixed up.
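The counting argument can be made concrete with a deliberately crude toy model. The sketch below assumes 10 hot and 10 cold particles and describes each particle only by which half of the container it is in; both the model and the names are hypothetical. It also applies Boltzmann's formula S = k ln W, which is the standard statistical definition rather than something derived here.

```python
import math

BOLTZMANN = 1.380649e-23  # Boltzmann constant in J/K

def microstate_count(n_per_gas, hot_on_left, cold_on_left):
    """Number of ways to choose which hot particles and which cold
    particles sit in the left half (toy model: position is just left/right)."""
    return math.comb(n_per_gas, hot_on_left) * math.comb(n_per_gas, cold_on_left)

def boltzmann_entropy(count):
    """Statistical entropy S = k * ln(W) for W microstates."""
    return BOLTZMANN * math.log(count)

n = 10
separated = microstate_count(n, hot_on_left=n, cold_on_left=0)        # all hot left, all cold right
mixed = microstate_count(n, hot_on_left=n // 2, cold_on_left=n // 2)  # evenly mixed

print(f"separated macrostate: {separated} microstate(s)")
print(f"evenly mixed macrostate: {mixed} microstates")
print(f"all possible left/right assignments: {2 ** (2 * n)}")
print(f"entropy difference: {boltzmann_entropy(mixed) - boltzmann_entropy(separated):.3e} J/K")
```

Even with only 20 particles the mixed macrostate has tens of thousands of microstates against a single one for the fully separated macrostate, and the gap grows extremely fast with particle number.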

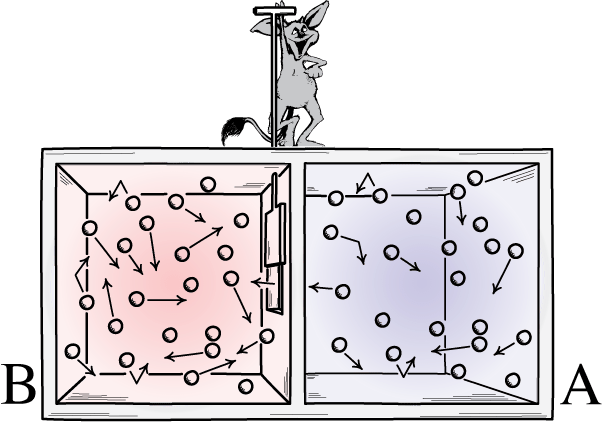

There is a thought experiment we can do with this, called Maxwell’s demon. Imagine we have a container with hot and cold gas, and in the center there is a partition which can be opened or closed to allow gas to go through to the other side. Assume this is an isolated system. If the partition is initially closed and the hot and cold gas are separated, this is a low-entropy state. When the partition is opened, entropy will increase as the gas begins mixing until it is in thermal equilibrium. However, even at this point individual gas molecules will be moving at different speeds. Temperature has to do with the average speed of molecules. What if some clever demon could rapidly open and close the partition to only allow fast molecules to pass from right to left and only slow molecules to pass from left to right? Eventually the left side would be dominated by fast-moving (hot) gas and the right side would be dominated by slow-moving (cold) gas, and entropy would have decreased in an isolated system. Such a situation would violate the Second Law!
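The demon's sorting rule is simple enough to sketch as a toy simulation. Everything here is an illustrative assumption: speeds are drawn uniformly rather than from a realistic distribution, a "fast" molecule is one at or above the median speed, and the demon's own operation is treated as free, which is exactly the idealization the thought experiment relies on.

```python
import random

def run_demon(n_molecules=200, n_steps=20000, seed=0):
    """Toy Maxwell's demon. Returns the mean squared speed (a stand-in
    for temperature) on the left and right sides after sorting."""
    rng = random.Random(seed)
    speeds = [rng.uniform(100.0, 900.0) for _ in range(n_molecules)]
    sides = [rng.choice("LR") for _ in range(n_molecules)]  # start fully mixed
    threshold = sorted(speeds)[n_molecules // 2]            # median splits fast from slow

    for _ in range(n_steps):
        i = rng.randrange(n_molecules)  # molecule i arrives at the partition
        fast = speeds[i] >= threshold
        if sides[i] == "R" and fast:
            sides[i] = "L"              # demon lets fast molecules pass right to left
        elif sides[i] == "L" and not fast:
            sides[i] = "R"              # demon lets slow molecules pass left to right
        # otherwise the partition stays closed

    def mean_square_speed(side):
        values = [s * s for s, where in zip(speeds, sides) if where == side]
        return sum(values) / len(values)

    return mean_square_speed("L"), mean_square_speed("R")

left, right = run_demon()
print(f"mean squared speed, left:  {left:.0f}")
print(f"mean squared speed, right: {right:.0f}")
```

In this idealized model the left side ends up "hotter" than the right without any energy being accounted for, which is what makes the scenario look like a violation.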

The solution to this conundrum is that physically opening and closing the partition would require energy, which means this is not in fact an isolated system: energy is being added. Additionally, the motion of the partition would increase entropy in the system and its surrounding environment via friction. So in reality, such a setup is not possible. Decreasing entropy in a system requires an input of energy.

How entropy is unlike disorder

It is important to note that of the two examples above, only the hot and cold gas actually involves entropy. Let’s consider another example of a process: taking clothes from a dresser and throwing them on the floor. This does increase entropy, but only because clothes on the floor have lower gravitational potential energy than clothes held in drawers in a tall dresser. Consider, alternatively, a bunch of articles of clothing folded neatly and laid out on the floor which are then thrown into a messy pile. This has actually decreased the entropy of the system, since the clothes on top of the pile now have greater gravitational potential energy. The pile of clothes could potentially do work by toppling over and pushing something, whereas they could do no work when folded on the ground.

For another example, consider books on a bookshelf: they can be in order (e.g. alphabetically by author name) or they can be disordered (i.e. in some random order). Both of these states have the same amount of entropy; the energy gradient has not changed.

Entropy and disorder are therefore not the same thing, although they are similar concepts.

What is disorder?

First, we need to identify what order is. Order is subjective to humans. Going back to the book example, there is nothing physically different about a book by Aristotle as compared to a book by Žižek. The ordering is an abstraction, based on properties of the books that are meaningful to humans.

With this in mind, we can still think about order and disorder in terms of microstates and macrostates. There is generally only one way to arrange the books alphabetically by author’s last name, while there are many ways to arrange the books so that the order is not meaningful. Statistically, any change to an ordered system like this is prone to lead towards a disordered state, simply because there are more disordered states. However, this is not a physical law, it is not entropy, and above all else it is not the Second Law of Thermodynamics.
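The "more disordered states" claim is plain counting, and a short sketch shows how lopsided it is. The shelf sizes, trial count, and function names below are hypothetical, and books are assumed to have distinct authors so that exactly one arrangement is alphabetical.

```python
import math
import random

def count_arrangements(n_books):
    """Number of distinct left-to-right orderings of n distinct books."""
    return math.factorial(n_books)

def fraction_ordered_after_shuffle(n_books, trials, seed=0):
    """Shuffle a shelf repeatedly and report how often it lands in the
    single alphabetical order."""
    rng = random.Random(seed)
    ordered = list(range(n_books))  # 0..n-1 stands for alphabetical order
    hits = 0
    for _ in range(trials):
        shelf = ordered[:]
        rng.shuffle(shelf)
        hits += shelf == ordered    # True counts as 1
    return hits / trials

print(f"arrangements of 10 books: {count_arrangements(10)}")
print(f"chance a random arrangement is alphabetical: {1 / count_arrangements(10):.2e}")
print(f"observed for 4 books: {fraction_ordered_after_shuffle(4, trials=20000):.4f}")
print(f"expected for 4 books: {1 / count_arrangements(4):.4f}")
```

This is ordinary combinatorics about labels that matter to people; nothing in the calculation involves energy or temperature.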